Introduction

Performance appraisal is viewed as a process through which organisations identify, measure, and improve employees’ execution of their roles (Augustine 2009). This implies that before attempting to improve employee performance, the department involved must identify the roles to be considered. The current performance level should then be measured to identify the strengths and weaknesses of the relevant personnel. Having measured performance, the organisation can specify ways of improving how employees execute their roles. To succeed in the entire process, the organisation must engage in thorough data collection that helps to identify critical, behavioural, and professional competencies. After determining these competencies, the organisation can easily identify the capabilities that should be strengthened and the weaknesses that must be eliminated (Berg 2009). Moreover, the evaluation is the basis for determining which employees should be rewarded and promoted to higher ranks owing to their exceptional performance. However, it is important to understand that performance appraisal is neither an impromptu or spontaneous exercise nor a confrontational tool (Berg 2009). Instead, it is a continuous, ongoing activity that should be conducted in real time. Although it is an important activity, it is also highly sensitive, since inherent emotions, diverse views, and resistance make it complex. This research seeks to identify various aspects of performance appraisal, including whether there is a correlation between employees’ performance and attitudes, and whether managers’ competence in conducting the appraisal affects workers’ capability to execute their roles. Additionally, it seeks to determine whether the accuracy of the rating methods affects employees’ attitudes towards performance appraisal.

Research questions

The literature review has identified research questions that should be considered in this study. These research questions relate to employees’ attitudes and perceptions toward performance appraisal, as shown below.

- Is there a relationship between employees’ work experience and their attitudes toward performance appraisal?

- Does managers’ competence in the performance appraisal system affect employees’ attitudes toward performance appraisal?

- Does the accuracy of rating affect employees’ attitudes toward performance appraisal?

The first research question is concerned with whether workers’ work experience affects their perceptions of performance appraisal. It is intended to support a study of whether employees’ working conditions and skill levels instil fear of performance appraisals.

The second research question addresses managers’ competence. Essentially, it examines the effect of managers’ capabilities when conducting performance appraisals. In the literature review, some researchers argued that managers’ lack of capability is a fundamental hindrance to the effectiveness of performance appraisals. If the appraisal is faulty, the ratings might lead to the promotion of unworthy employees, a dangerous outcome that can hinder the success of the organisation. It is therefore important to discuss how managers’ training and assessment capabilities affect employees’ trust in their supervisors. Accordingly, the findings will provide a rationale for conducting truthful, realistic, and reliable appraisals that are consistent with organisational objectives rather than personal desires.

The third research question seeks information about the effect of rating accuracy on employees’ perceptions of performance appraisal. Since performance appraisals are meant to inform employees about their performance level, it is crucial for supervisors to conduct an accurate and unbiased assessment. This research question therefore initiates a study of how bias and false assessment can affect employees’ trust and the dependability of the entire undertaking. In addition, it explores the importance of avoiding judgmental assessment and the use of incorrect parameters during evaluation.

Literature review on questionnaire design

Background information

The questionnaire was designed through a deliberate, multi-stage process. This process involved formulating items that will help to answer the three research questions presented in the previous section, as well as consulting the literature on which aspects should be included and the justifications for their use.

Owing to the need to explore employees’ attitudes towards the performance appraisal system, a 22-item questionnaire was developed to gather data from the respondents (employees) at a single point in time. The questionnaire was divided into four sections: performance appraisal goals, accuracy of rating, overall satisfaction, and personal data.

This section discusses and develops the questionnaire design of the underlying study as well as the justifications used by the researcher in selecting the various research methods and approaches used to design the questionnaire.

Choice of quantitative approach

The quantitative approach has been used in this research in order to draw on the strengths associated with the philosophy of positivism. A quantitative research approach has been defined in the literature “as a type of empirical research into a social phenomenon or human problem, testing a theory consisting of variables which are measured with numbers and analysed with statistics in order to determine if the theory explains or predicts phenomena of interest” (Yilmaz 2013, p. 311). Drawing from this definition, a quantitative research approach is justifiable in the present study because it allows the researcher to use the numerical items in the questionnaire to identify and measure the various aspects of performance appraisal and their effect on employees’ performance and attitudes.

Additionally, the quantitative approach allows the researcher to reduce the various performance appraisal aspects to numerical values that can then be used to undertake comprehensive statistical analysis with a view to determining the relationships between the variables of interest in terms of generalisable causal effects (Gelo, Braakmann, & Benetka 2008). For example, the quantitative approach makes it possible for the researcher to use the numerical values in the standardised questionnaire to objectively, rigorously, and systematically gather evidence on the relationship between employees’ work experience and their attitudes toward performance appraisal, and then to run statistical procedures that objectively determine the direction of that relationship and how it affects other important variables in the study.
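
The section does not name the statistical procedure that will be used to determine the direction of the relationship between work experience and attitudes. As a minimal sketch, assuming Pearson’s correlation coefficient is used and that each respondent’s attitude is summarised as a mean score on the 1 to 5 Likert items, the direction of the association can be read from the sign of r. The function name `pearson_r` and the two input variables are hypothetical; because Likert data are ordinal, a rank-based coefficient such as Spearman’s may be preferable in practice.

```python
from math import sqrt


def pearson_r(x: list[float], y: list[float]) -> float:
    """Pearson correlation coefficient between two paired samples.

    x: e.g. each respondent's years of work experience (hypothetical variable).
    y: e.g. each respondent's mean attitude score on the 1-5 Likert items.
    The sign of the result gives the direction of the linear association.
    """
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two paired samples of equal length (at least 2)")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    # Sum of cross-products of deviations (proportional to the covariance).
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when a sample has zero variance")
    return cov / sqrt(var_x * var_y)
```

A value near +1 would indicate that more experienced employees tend to report more favourable attitudes, a value near -1 the opposite, and a value near 0 no linear association.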

Lastly, because the present study aims to identify various aspects of performance appraisal, such as employees’ attitudes, managers’ competence, employees’ capability to execute roles, and the accuracy of performance rating methods, it is important to employ a quantitative research approach. Such an approach is effective in studying a large number of people through a representative sample and generalising the findings to a wider population, since the results can be reproduced in line with the positivist meta-theoretical assumption on reliability (Sekaran 2006; Weber 2007). Moreover, the quantitative approach allows the researcher to collect primary data using formal instruments (questionnaires), which are relatively quick to administer and cost-effective when administered online (Yilmaz 2013).

Types of questionnaires & choice

Available methodology scholarship demonstrates that questionnaires are essentially “fixed sets of questions that can be administered by paper and pencil, as a Web form, or by an interviewer who follows a strict script” (Harrell & Bradley 2009, p. 6). Rattray and Jones (2007, p. 235) acknowledge that questionnaires are used “to enable the collection of information in a standardised manner which, when gathered from a representative sample of a defined population, allows the inference of results to the wider population.” Consequently, it is important to have adequate knowledge of the different types of questionnaires in order to select the most effective type for this study.

There are three types of questionnaires, namely “structured questionnaires, semi-structured questionnaires, and unstructured questionnaires” (Polonsky & Waller 2010). Structured questionnaires are the bedrock of large quantitative surveys (anything over 50 interviews) and share the following characteristics: (1) the wording of the questions and the order in which the items are presented to the participant are predetermined, (2) most of the questions have predefined responses and hence give a study participant minimal latitude to stray beyond them, (3) the questionnaires are often used where the possible responses can be directly anticipated, and (4) the questionnaires are often administered by telephone, face-to-face, or through self-completion (Polonsky & Waller 2010). For their part, semi-structured questionnaires use a mixture of questions with predefined responses and items that give participants the latitude to respond freely. Although questions in a semi-structured questionnaire are asked in the same way, this type is more flexible than the structured questionnaire because it not only gives participants the opportunity to express themselves more deeply, but also enables the researcher to probe further to find out the reasons for certain responses (Polonsky & Waller 2010). Lastly, unstructured questionnaires are informal to the extent of allowing the researcher to use a checklist of questions rather than predetermined questions and responses. Accordingly, unstructured questionnaires give the researcher considerable latitude to select the most appropriate way of questioning and recording responses (Barnham 2012).

Drawing from this elaboration, the structured questionnaire is the most suitable type for the present study, as it is better placed to use a Likert-type scale to measure employees’ attitudes, beliefs, and intentions regarding the various components of performance appraisal under study (Rattray & Jones 2007). Additionally, the researcher intends to survey a large sample of participants, which justifies the structured questionnaire because it is much easier and less costly to collect data from a large sample using predetermined questions and responses, and also to generalise the study findings to a wider population (Fowler 2008). Moreover, the researcher intends to collect numerical data that can be easily analysed with statistical software packages such as SPSS and STATA to answer the key research questions. Structured questionnaires provide the right context for collecting objective, numerical data from the field with ease (Sekaran 2006). Lastly, structured questionnaires are easier to administer; they also increase the consistency of responses through pre-coded response options, significantly reduce the bias often caused by interviewer characteristics and variability in interviewer skills, and require low training costs for the person administering them (Rattray & Jones 2007; Polonsky & Waller 2010; Barnham 2012).

Attributes of a good questionnaire

An effective questionnaire must always enable the researcher to collect objective raw data from the field, which can then be used to conduct statistical analyses in line with the quantitative research tradition (Siniscalco & Auriat 2005). Most of the attributes of a good questionnaire relate to the formatting and ordering of questions to provide a level playing field for all participants in a study. Available literature demonstrates that questions in the data collection instrument should be easily understood, clear enough to elicit only one interpretation from participants, and free of words with vague meanings (Polonsky & Waller 2010). Additionally, the questionnaire should not be too long and must be developed in a way that reduces response bias. Lastly, the questions should be capable of eliciting a precise answer, and they must be able to provide responses that directly answer the key research questions or test the study hypotheses (Sekaran 2006).

Most of these attributes have been safeguarded in this study through a rigorous questionnaire design and development exercise. For example, vague and ambiguous items have been addressed by piloting the questionnaires and reconstructing various words and phrases based on participant responses. Available literature demonstrates that “the generation of items during questionnaire development requires considerable pilot work to define wording and content” (Rattray & Jones 2007, p. 237). Additionally, the researcher has expended considerable time and effort standardising the questionnaires to ensure that each study participant is exposed to the same items and the same system of coding responses. Consequently, the revised questionnaires are expected to achieve adequate reliability and validity, as standardisation ensures that differences in responses to the items can be interpreted as reflecting differences among respondents rather than variations in the processes used to generate the responses (Siniscalco & Auriat 2005). Lastly, the researcher has consulted a number of professionals in the domain of performance appraisal and reviewed the associated literature to reduce bias in the questionnaire items and strengthen the instruments’ content validity, which in turn ensures that the data collected from the field can answer the study’s three research questions (Rattray & Jones 2007).

Information required/content

Since the questionnaire is essentially a measurement instrument, researchers should always ground its main purpose in operationalising the information needs of the study into a format or layout that allows for statistical measurement (Brancato et al 2006). Consequently, the process of questionnaire design and development requires the researcher to make a well-informed decision about the information sought through the questionnaire (Rattray & Jones 2007). This requirement led the researcher to review the existing literature on performance appraisal and related topics, not only to identify gaps in the literature, but also to avoid duplicating existing knowledge. The review shows that there are many studies on goal alignment in performance appraisal systems (e.g., Ayers 2013) as well as on performance appraisal satisfaction, perceived appraisal purpose, appraisal fairness, goal setting, and job satisfaction (Sudarsan 2009; Selden & Sowa 2011; Krats & Brown 2013); however, there appears to be a dearth of literature on employee satisfaction with the appraisal system and with performance appraisal ratings (Krats & Brown 2013). The design of the questionnaire takes these gaps into consideration by including whole sections on accuracy of ratings and overall satisfaction with performance appraisal.

Defining the target respondents

Designing a questionnaire requires the researcher not only to determine the sample population, but also to establish the characteristics of the respondents (e.g., age and education level), as such features affect the type of questions included in the instrument (Denscombe 2009). In this study, the researcher has drawn on previous research studies to define the parameters of the target respondents. Studies such as those of Ayers (2013) and Selden and Sowa (2011) use employees or specific performance appraisal programs as their units of analysis, and they demonstrate the advantages of enrolling a large sample to ensure that the results can be generalised to a wider population. Drawing from these findings, the questionnaire design and development process has taken into consideration the fact that the unit of analysis is the organisation’s employees rather than a specific performance appraisal program or system, as the study is interested in investigating employees’ attitudes and perceptions toward performance appraisal.

Choosing the methods of contacting respondents

Available literature demonstrates that the methods used to contact participants in order to collect data from the field (e.g., self-completion/self-enumeration, personal interviews, telephone interviews) not only affect the type of questions included in the questionnaire, but also influence the way in which the items are phrased and ordered (Brancato et al 2006; Statistics Canada 2010). Since the questionnaire is self-completed, the researcher has included self-help instructions and an easy-to-follow structure so that respondents can complete it without relying on the input or direction of the researcher. Additionally, a telephone number is included so that respondents can request assistance if they experience challenges in completing the questionnaire, and the questionnaire uses a more elaborate visual presentation to encourage respondent participation.

Objectives of questionnaire items

Every item included in the questionnaire should have the capacity to achieve a distinct objective, which in turn aids in addressing the study’s main objectives and key research questions (Rattray & Jones 2007). The researcher has ensured that all the questions included in the questionnaire have the capacity to achieve related objectives by:

- reviewing available literature to ensure adequate understanding of what the study intends to find out,

- desisting from including items that do not add value to the purpose of study,

- developing a prior understanding of how each item will be analysed to achieve the stated objectives,

- ensuring the items are reasonable in the participants’ eyes,

- exercising a selective and realistic orientation in developing the parameters for the information needed to address the study objectives and key research questions (Taylor-Powell 1998).

Number of questions

The number of questions in a questionnaire should be sufficient to address the study’s objectives and answer the key research questions; however, care should always be taken to ensure that all the items can be completed within comfortable limits so that respondents are not exhausted (Rattray & Jones 2007). Available literature also demonstrates that a researcher should aim to categorise the questions according to common themes or subject areas to ensure consistency and reduce errors that may arise when respondents provide their feedback (Statistics Canada 2010). These considerations have informed the researcher’s decision to group questions into specific categories that will assist in addressing the study’s main objectives and key research questions. Overall, the categories used in the questionnaire are personal/demographic information, performance appraisal goals, accuracy of rating, and overall satisfaction with performance appraisal. Each of these sections contains 5-6 questions designed to capture responses that are of critical importance in addressing the study’s objectives and key research questions.

Question length

Available scholarship on questionnaire design underscores the importance of keeping questions as short as possible to ensure a high response rate (van de Mortel 2008; Lietz 2010). These authors acknowledge that long questions decrease respondents’ comprehension of the task, which in turn reduces the likelihood of receiving quality responses. These concerns have been taken into consideration in designing the questionnaire for this study, as the researcher has not only carefully evaluated the instrument to eliminate items that do not directly relate to the objectives of the study, but also avoided questions that are too demanding and time-consuming.

Grammar

An effective questionnaire should be easily understood by respondents and designed in a manner that keeps grammatical complexity to a minimum (Thayer-Hart et al 2010). Moreover, as these authors acknowledge, the communication should be actively oriented and free from any possessive forms of language. In keeping with these requirements, the researcher has consulted other standardised questionnaires on the topic to get a sense of how they are grammatically structured for optimal effectiveness. Additionally, the researcher has asked peers to check the instrument for spelling and grammatical mistakes, readability and flow, and consistency with the study’s objectives and research questions.

Eliminating socially desirable responses

Socially desirable responding (SDR) denotes a phenomenon whereby participants in a research study fail to respond truthfully and instead provide responses that make them look good in the eyes of the researcher, thereby introducing extraneous variation in scale scores which, in turn, compromises the validity of the survey (van de Mortel 2008). Specifically, “social desirable responses are answers that make the respondent look good, based on cultural norms about the desirability of certain values, traits, attitudes, interests, opinions, and behaviours” (Steenkamp, de Jong, & Baumgartner 2009, p. 2). According to these authors, self-favouring response tendencies are best understood either in terms of agency-related contexts involving variables such as dominance, assertiveness, independence, control, mastery, uniqueness, power, and socioeconomic status, or in terms of communion-related contexts involving variables such as membership, belonging, intimacy, love, connectedness, endorsement, and nurturance.

Recognising that SDR is facilitated by how questions are designed and conceptualised, the researcher has taken care not only to remove all response categories that may encourage socially desirable responses (e.g., “I do not know” response categories), but also to ensure that the intended meaning of each question is easily understood by respondents. Additionally, the researcher has reframed questions that may appear socially sensitive to minimise the likelihood of respondents providing self-deceptive information to conform to perceived socially acceptable values. Lastly, the researcher has ensured that questions are designed in a way that does not pressure respondents to conform in any particular way based on social or professional expectations (van de Mortel 2008).

Specificity and simplicity

Researchers have emphasised the need to use specific terms rather than general terms in the development of questions to minimise the cognitive load on respondents, and also to break down more intricate questions into simpler ones to increase respondent comprehension as well as response rates (Lietz 2010; Statistics Canada 2010). The researcher has ensured the specificity and simplicity of questions in the present data collection instrument by, among other things:

- avoiding the use of words that indicate vagueness (e.g., ‘maybe’, ‘probably’, or ‘perhaps’),

- decreasing the level of abstraction in questions by narrowing down their scope,

- providing specific behavioural illustrations of certain concepts used in the questionnaire to enhance comprehension,

- avoiding asking respondents to provide qualified judgements while responding,

- avoiding the use of hypothetical questions related to the respondents’ future attitudes or behaviours,

- avoiding questions that ask respondents to recall events that occurred in the distant past.

Developing the wording of questions

In question wording, the standard practice is to desist from employing “complex, technical terms, jargon, and phrases that are difficult to understand” (Thayer-Hart et al 2010, p. 8). Indeed, the wording of questionnaire items can alter survey findings and provide erroneous data if respondents:

- do not understand what the words in a question mean,

- interpret the items differently than intended,

- are unfamiliar with the concept(s) conveyed by the wording of a question (Statistics Canada 2010).

In realisation of these challenges, the researcher has not only used simple, everyday words in the development of questions to guarantee that all terms/phrases are appropriate for the population being surveyed, but also curtailed the use of acronyms and abbreviations to ensure clarity of wording and ease of comprehension. Additionally, the researcher has exposed the data collection instrument to a peer review with the view to identifying and changing words that may present difficulties to respondents during the actual data collection exercise. Lastly, adequate care has been taken to be specific and to avoid double-barrelled and leading questions to ensure the wording of questions delivers the same meaning for all respondents as that intended by the researcher.

Meaningful order of questions

Poor ordering of questions has been found to “not only threaten the validity of the results but also the generalisability of results to the population” (Lietz 2010, p. 256). The standard practice is to move from more general and less complex questions toward more specific and highly specialised items, as such ordering gives respondents an opportunity to familiarise themselves with the instrument by answering simpler items before attempting more demanding ones (Denscombe 2009). The researcher has used this justification to revise the first questionnaire so that it begins with questions on respondents’ personal/demographic information and proceeds from general to specific questions. However, the researcher has taken care to ensure that the personal/demographic questions do not reveal respondents’ identities, as any such revelation can encourage negative feelings about providing demographic information, affecting respondents’ answering behaviour or participation (Lietz 2010).

Impact of order and direction of the Likert Scale

Available literature demonstrates that the arrangement of response categories in the Likert Scale may affect respondents’ answers through several factors, including primacy effects (the tendency of respondents to favour alternatives presented earlier in the scale) and recency effects (the tendency to favour alternatives presented later, after reading through all the options). However, a well-developed questionnaire should enable respondents to shift their frames of reference and select the response category that best reflects their view regardless of its position on the Likert Scale (Denscombe 2009; Lietz 2010). Consequently, the focus should be on ensuring that the questions and response categories in Likert-type scales are as clear and easily comprehensible as possible.

Drawing from this elaboration, the researcher has ensured that the questionnaire does not contain unusual topics, as these may present complexities to respondents and encourage them to respond on the basis of primacy or recency effects. Additionally, the researcher has ensured that the questions and response categories are short and clear to facilitate comprehension. The ‘strongly disagree’ response category has been placed on the left side of the Likert Scale and the ‘strongly agree’ category on the right side, as available literature demonstrates that the direction of the response categories does not have a substantial impact on respondents as long as the categories are assigned corresponding numerical weights (Dawes 2008). On this basis, and reflecting the intuitive association of agreement with higher values, the researcher has assigned a numerical value of ‘1’ to the ‘strongly disagree’ response category and a numerical value of ‘5’ to the ‘strongly agree’ response category.
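
To make the coding scheme concrete, the sketch below maps response labels to the numerical weights described above. Only the two end points (1 = ‘strongly disagree’, 5 = ‘strongly agree’) come from the text; the three intermediate labels, and the function names `code_response` and `code_respondent`, are assumptions for illustration.

```python
# Numerical weights for the five-point scale. Only the end points are stated
# in the text (1 = strongly disagree, 5 = strongly agree); the three middle
# labels are assumed for illustration.
LIKERT_CODES: dict[str, int] = {
    "strongly disagree": 1,
    "disagree": 2,
    "neither agree nor disagree": 3,
    "agree": 4,
    "strongly agree": 5,
}


def code_response(label: str) -> int:
    """Return the numerical weight for one response label (case-insensitive)."""
    key = label.strip().lower()
    if key not in LIKERT_CODES:
        raise ValueError(f"unrecognised response category: {label!r}")
    return LIKERT_CODES[key]


def code_respondent(labels: list[str]) -> list[int]:
    """Code one respondent's answers, item by item, in questionnaire order."""
    return [code_response(label) for label in labels]
```

For example, `code_respondent(["Agree", "Strongly disagree"])` returns `[4, 1]`. Rejecting unrecognised labels, rather than silently skipping them, keeps the coding identical for every respondent, which is the point of standardisation.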

Questionnaire reliability and validity

Questionnaire reliability is defined as the degree to which the data collection instrument generates the same findings on repeated trials, while questionnaire validity is the degree to which the instrument measures what it purports to measure (Sekaran 2006). Drawing from this description, the reliability of the present questionnaire has been ensured by (1) standardising the instrument, (2) ensuring that all questions are properly worded and easily understood, (3) ensuring that the response categories are correctly coded according to the corresponding numerical weights, and (4) ensuring the stability of the different measures used in the questionnaire (Bryman & Bell 2008).
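
The section does not name a statistic for checking reliability. One commonly used estimate of internal consistency for multi-item Likert scales is Cronbach’s alpha, which compares the sum of the individual item variances with the variance of respondents’ total scores: alpha = k / (k - 1) × (1 - sum of item variances / variance of total scores), where k is the number of items. The sketch below assumes a respondents-by-items matrix of coded scores (1 to 5) for one questionnaire section; it is an illustration, not the study’s stated procedure, and the function name is hypothetical. Alpha measures consistency within a single administration, whereas the definition above refers to repeated trials, which would instead call for a test-retest comparison.

```python
from statistics import pvariance


def cronbach_alpha(rows: list[list[int]]) -> float:
    """Cronbach's alpha for a respondents-by-items matrix of coded scores.

    Each inner list holds one respondent's coded answers to the k items of
    a single scale (e.g. one questionnaire section).
    """
    if not rows:
        raise ValueError("no respondents")
    k = len(rows[0])
    if k < 2 or any(len(r) != k for r in rows):
        raise ValueError("need a rectangular matrix with at least two items")
    # Variance of each item (column) across respondents.
    item_vars = [pvariance([r[j] for r in rows]) for j in range(k)]
    # Variance of each respondent's total score across the k items.
    total_var = pvariance([sum(r) for r in rows])
    if total_var == 0:
        raise ValueError("total scores have zero variance; alpha is undefined")
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)
```

Population variances are used throughout; using sample variances instead gives the same alpha, because the n/(n - 1) correction cancels in the ratio.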

There are three broad categories of validity in quantitative research studies, namely “(1) measurement validity (i.e., content and construct validity), (2) design validity (i.e., internal and external validity), and (3) inferential validity (i.e., statistical conclusion validity)” (Venkatesh et al 2013, p. 32). In this research, the questionnaire’s content validity has been established by pilot-testing the instrument to ensure that the different categories of evaluation represent all the components needed to adequately address the study objectives and key research questions. Additionally, the researcher has established construct validity not only by reviewing related literature to gain an adequate understanding of all the focal constructs, but also by ensuring that the number of items in the instrument is adequate and that the items have been piloted to ensure consistency. Lastly, the questionnaire’s design validity (internal and external) has been established by (1) ensuring the relevance, appropriateness, and representativeness of items in the instrument through piloting, (2) comparing the standardised instrument with other similar validated measures of performance appraisal, and (3) establishing the correct operational measures for the theoretical concepts and variables under examination by linking the questionnaire items and measures (e.g., Likert-type scales) to the study’s main objectives and research questions (Sekaran 2006; Bryman & Bell 2008).

Ethics

Available literature demonstrates that ethics in research contexts refer to “the appropriateness of your behaviour in relation to the rights of those who become the subject of your work, or are affected by it” (Saunders, Lewis, & Thornhill 2007, p. 178). Ethical issues arise in using the questionnaire to collect primary data from the field, as the researcher is expected to “ensure that no harm occurs to these voluntary participants and that all participants have made the decision to assist after receiving full information as to what is required and what, if any, potential consequences may arise from such participation” (Polonsky & Waller 2010, p. 68). Consequently, in the present study, the researcher will make adequate arrangements to (1) seek written permission from the senior management of the organisation(s) that will provide the respondents for data collection, (2) ensure that participation in the research process is voluntary and that all respondents are provided with adequate information to make informed decisions without coercion or deception, (3) ensure that respondents fully understand what they are requested to do and are fully informed of any potentially adverse consequences of participation, and (4) guarantee the individual confidentiality and anonymity of respondents (Gregory 2003; Polonsky & Waller 2010).

Pilot testing

Lewis and Slack (2007) assert that pilot testing, defined as the process of administering a preliminary questionnaire to a selected group of typical respondents, is an integral element of questionnaire construction: it not only gives researchers important insight into how easy or difficult the instrument is to complete, but also helps to identify unclear concepts and to make the questionnaire more user-friendly. Through pilot testing, the researcher is able to identify and reduce issues that might affect the reliability of the study, such as instrument or interviewer bias.

The initial questionnaire developed for this study was piloted on 20 participants who were not part of the actual study sample. The piloting exercise had the following objectives:

- ensuring each question measures what it is supposed to measure,

- ensuring all the words are clearly understood,

- ensuring that all respondents interpret the various questions contained in the instrument in the same way,

- ensuring all response categories are appropriate,

- ensuring the range of response categories is effectively used,

- ensuring respondents are able to follow the directions in the instrument,

- ensuring the instrument’s layout and wording create a positive impression that motivates individuals to respond,

- identifying the time required to complete the instrument,

- ensuring the instrument is able to collect pertinent data that would provide the needed responses to the key research questions.

The analysis of the piloting exercise is included in the reflection section.

Data Analysis

The researcher employed descriptive statistics to analyse the data arising from the piloting exercise involving 20 respondents and to identify any areas that might need to be changed so that the instrument provides adequate responses to the study’s objectives and key research questions. The key highlights of the piloting exercise are discussed below. The difficulties experienced in analysing the data using descriptive statistics are included in the reflection section.
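The section does not show how the percentages were obtained, so the following is only a minimal sketch, assuming each respondent's answer to a five-point item is stored as its category label, of how a frequency table (count and percentage per category) could be produced in Python. The function name `frequency_table` and the sample responses are hypothetical and are not the study's pilot data.

```python
from collections import Counter

# Five response categories, in the left-to-right order of the final questionnaire.
CATEGORIES = ["Strongly Disagree", "Disagree", "Neutral", "Agree", "Strongly Agree"]


def frequency_table(responses):
    """Return (category, count, percentage) rows for one Likert-type item.

    Percentages are taken over all responses supplied and rounded to one
    decimal place. Unrecognised labels raise ValueError so that coding
    errors are caught before any percentages are reported.
    """
    if not responses:
        raise ValueError("no responses to tabulate")
    unknown = set(responses) - set(CATEGORIES)
    if unknown:
        raise ValueError(f"unrecognised responses: {sorted(unknown)}")
    counts = Counter(responses)  # missing categories count as 0
    n = len(responses)
    return [(c, counts[c], round(100 * counts[c] / n, 1)) for c in CATEGORIES]


if __name__ == "__main__":
    # Hypothetical answers from 20 pilot participants (NOT the study's data).
    sample = (["Strongly Disagree"] * 1 + ["Disagree"] * 2 + ["Neutral"] * 6
              + ["Agree"] * 8 + ["Strongly Agree"] * 3)
    for category, count, pct in frequency_table(sample):
        print(f"{category:<18}{count:>3}{pct:>7.1f}%")
```

With 20 respondents, each respondent accounts for 5 percentage points, which is worth keeping in mind when interpreting small differences between categories.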

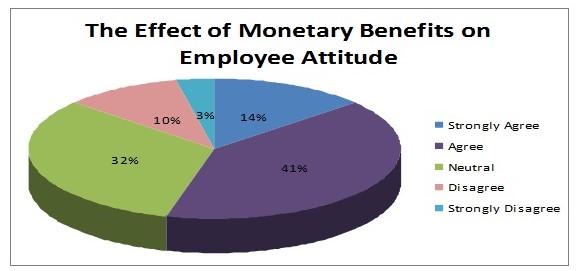

The questionnaire results indicated that, in general, employees demonstrated positive attitudes toward performance appraisal and its usefulness. There was a notable relationship between monetary benefits and employees’ attitudes toward performance appraisal: 41% of the respondents agreed that the most important thing they expect from the performance appraisal is monetary benefits, 14% strongly agreed, and 32% were neutral. Thus, the majority (55%) showed concern with monetary benefits and their link to performance appraisal. However, it should be emphasised that employees’ tendency to link salary increases and promotions to the performance appraisal reduces the validity of the performance appraisal system itself.

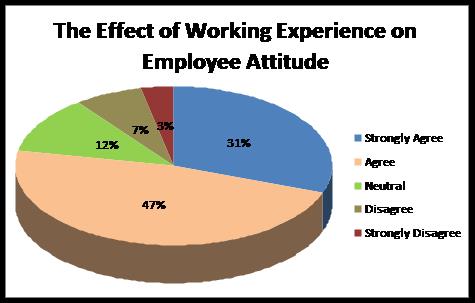

There was a negative relationship between employees’ work experience and their attitudes toward performance appraisal. Frequency tabulations showed that 47% of respondents with 5-10 years of work experience held negative attitudes towards the assessment system; that is, they perceived the system as unable to measure their real performance. Moreover, 31% of employees with more than 10 years of work experience strongly agreed with the negative relationship between work experience and attitudes toward performance appraisal. Thus, it can be concluded that the more work experience an employee has, the less likely he or she is to be satisfied with the assessment process.
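Figures such as “47% of respondents with 5-10 years of work experience” read as percentages within an experience band, that is, row percentages of a cross-tabulation. Assuming that reading, the sketch below shows one way to compute such a table in Python. The function name, the three attitude codes, and the example pairs are hypothetical; the experience bands follow the draft questionnaire.

```python
from collections import Counter, defaultdict


def crosstab_row_percent(pairs, row_order, col_order):
    """Cross-tabulate (row, column) pairs, e.g. (experience band, attitude).

    Each cell is expressed as a percentage of its row total, rounded to one
    decimal place. Rows with no observations are reported as all zeros.
    """
    counts = defaultdict(Counter)
    for row, col in pairs:
        counts[row][col] += 1
    table = {}
    for row in row_order:
        total = sum(counts[row].values())
        table[row] = {
            col: round(100 * counts[row][col] / total, 1) if total else 0.0
            for col in col_order
        }
    return table


# Experience bands from the draft questionnaire; attitude codes are hypothetical.
EXPERIENCE = ["Less than 1 year", "1-5 years", "5-10 years", "More than 10 years"]
ATTITUDE = ["Negative", "Neutral", "Positive"]

if __name__ == "__main__":
    # Hypothetical coded responses (NOT the study's data).
    pairs = [("5-10 years", "Negative"), ("5-10 years", "Positive"),
             ("More than 10 years", "Negative"), ("1-5 years", "Positive")]
    for band, row in crosstab_row_percent(pairs, EXPERIENCE, ATTITUDE).items():
        print(band, row)
```

Row percentages make bands of different sizes comparable, but with only 20 pilot respondents some bands may contain just a handful of people, so a single answer can move a row percentage considerably.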

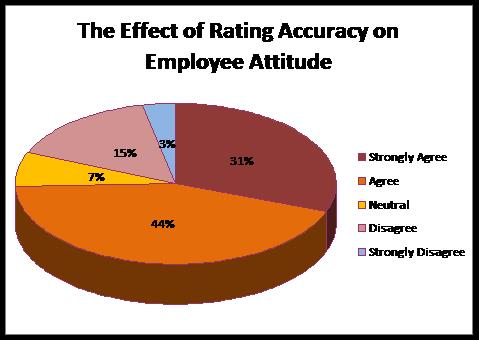

A relationship was also noted between the accuracy of rating and employee attitudes toward the appraisal system. Indeed, 44% of respondents agreed that the accuracy of rating influenced their attitudes toward performance appraisal, while 31% strongly agreed that rating accuracy has a significant effect on employee attitudes toward the appraisal (75% in total). Another 15% of the respondents disagreed that rating accuracy influenced employee attitudes toward the appraisal system. Overall, it can therefore be concluded that respondents who believed the rating system was inaccurate demonstrated negative attitudes toward the performance appraisal system.

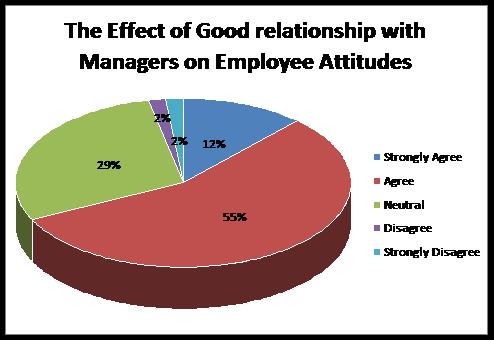

As far as the effect of the manager’s competence on employee attitudes toward performance appraisal is concerned, no significant relationship was found. Employees tended to associate their attitudes toward the appraisal system with their good relationship with the manager rather than with the manager’s competence. Specifically, 55% of respondents agreed that a good relationship with their superiors affects their attitudes toward the appraisal system, whereas 12% strongly agreed with this positive relationship, as shown in the figure above.

Lastly, it should be emphasised that the data obtained from the piloted questionnaire showed similar responses among employees working in the same department, who revealed comparable attitudes toward performance appraisal. Moreover, a good manager-employee relationship tended to influence the assessment.

Reflection

Reflection after Peer Review & Instructor’s Review

The issues raised in the peer and instructor reviews relate to the use of the Likert Scale, question wording, the design of response categories, and the efficacy of the various items. The Likert Scale was chosen because it is commonly used to measure employees’ attitudes towards and satisfaction with the performance appraisal system (Bryman & Bell 2008), and because it makes the various responses easy to quantify for subsequent analysis using established statistical procedures, in line with a quantitative research approach (Sekaran 2006).

Additionally, issues were raised about the justification for the ordering of the response categories in the Likert Scale. After consulting the literature on the issue, I concluded that the order of the response categories does not matter as long as the categories are correctly aligned with their respective measurement values. Available literature demonstrates that a well-developed questionnaire should enable respondents to shift their frames of reference and select the most appropriate response category regardless of its position on the Likert Scale (Denscombe 2009; Lietz 2010). Drawing on this, I decided to place the ‘strongly disagree’ response category on the left side of the Likert Scale and the ‘strongly agree’ response category on the right side, since the literature documents that the direction of the response categories does not have a substantial impact on respondents as long as the categories are assigned corresponding numerical weights (Dawes 2008). In the following sections, the review is discussed according to the main categories included in the questionnaire.
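The condition that categories keep “corresponding numerical weights” whatever their printed order can be illustrated with a minimal Python sketch. The 1-to-5 weights and the function names are illustrative assumptions rather than the study's coding scheme; `DRAFT_ORDER` is the order in which the draft questionnaire printed the categories for the knowledge-and-expertise item (Appendix 1), where “Disagree” appears before “Strongly Disagree”.

```python
# Assumed weighting scheme: 1 = Strongly Disagree ... 5 = Strongly Agree.
WEIGHTS = {
    "Strongly Disagree": 1,
    "Disagree": 2,
    "Neutral": 3,
    "Agree": 4,
    "Strongly Agree": 5,
}

# Category order as printed for one item in the draft questionnaire.
DRAFT_ORDER = ["Disagree", "Strongly Disagree", "Neutral", "Agree", "Strongly Agree"]


def code_responses(responses):
    """Map category labels to numerical weights.

    The weight is looked up by label, not by where the category was printed
    on the form, so reordering categories on the page leaves scores unchanged.
    """
    return [WEIGHTS[label] for label in responses]


def mean_score(responses):
    """Mean coded score for one item; raises ValueError if there are no responses."""
    coded = code_responses(responses)
    if not coded:
        raise ValueError("no responses to score")
    return sum(coded) / len(coded)


def code_by_position(label, printed_order):
    """Pitfall shown for contrast: weight = 1-based position on the printed form."""
    return printed_order.index(label) + 1


if __name__ == "__main__":
    # Positional coding of the draft order would score "Disagree" as 1,
    # while label-based coding keeps it at its intended weight of 2.
    print(code_by_position("Disagree", DRAFT_ORDER), WEIGHTS["Disagree"])
```

Keying the weights to labels is one simple way to make the scale direction on the page irrelevant to the analysis, which is the property the argument above relies on.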

Personal data section

Here, concerns were raised about whether to place this section at the beginning or at the end of the data collection instrument. After the piloting exercise, the decision was made to place the section at the beginning of the questionnaire, not only to follow the standard practice of moving from more general to more specific questions, but also to give respondents an opportunity to familiarise themselves with the instrument by answering simpler items before attempting more demanding ones (Denscombe 2009; Lietz 2010).

Also, some words in the personal data section were changed or added to ensure that they carry the same meaning for all respondents and are easily understood. Some questions were reformulated and redesigned to ensure that they capture what they are intended to capture and hence enhance the reliability of the instrument. Lastly, I was advised to replace the word “citizenship” with “nationality” to eliminate the confusion that may arise from the wrong choice of words. Upon careful consideration, I am now convinced that “nationality” is the better word to use, as it denotes identification with a particular nation rather than the legal status to reside in a given country (Keller 2014). Individuals can hold the citizenship of several countries, whereas nationality usually refers to the nation with which a person identifies, often his or her country of birth.

Performance appraisal goals

In this section, a number of concerns were raised about the wording of various response categories. For example, in the question “What does your performance appraisal determine?”, it was suggested that I change the second response from “salary” to “salary increment.” Upon reflection, I was convinced that the change was justified because it brings more focus to what the response is intended to measure. Additionally, it was suggested that I introduce the option of “other” in some of my multiple-choice and ranking questions. This suggestion was justified, and the option was included in such questions, as it gives respondents the opportunity to note other responses that may lie outside the researcher’s expectations but still within the scope of the study (Sekaran 2006). Indeed, such open options can elicit new knowledge that may be important in answering the key research questions or in suggesting new areas for research (Bryman & Bell 2008).

It was also suggested that I split the item that combined knowledge and expertise by stating that “knowledge and expertise are recognised and rewarded during the performance appraisal.” Upon revisiting the relevant literature, I found it reasonable to separate the two elements into two questions, since the literature is clear on the importance of avoiding double-barrelled questions in questionnaire design and development (Lietz 2010).

Accuracy of rating

Here, various concerns were raised about the wording and meaning of several questions. Specifically, I was advised to add some words and change others to make the questions more specific and ensure that they measure what they are intended to measure. Some words were felt to be too general and needed to be replaced with more specific ones to ensure sufficient comprehension and strengthen the capacity to answer the study’s key research questions. The literature is clear on the shortcomings of general and vaguely worded questions, including their failure to elicit the required responses and their tendency to lower participant response rates (Lietz 2010).

Concern was also raised about whether to include all five response categories in the Likert Scale or to remove the “neutral” category, on the grounds that it is almost impossible to obtain a genuinely neutral answer where attitudes and perceptions are concerned. Indeed, I was advised to use YES/NO responses for some questions on the accuracy of rating. However, after consulting a number of studies on performance appraisal that have used Likert-type scales to measure employee attitudes and perceptions (e.g., Ayers 2013; Selden & Sowa 2011), I saw no need to remove the ‘neutral’ response category, as such a move may interfere with the reliability of the measurement scale (Bryman & Bell 2008).

Conclusion

This paper has discussed many of the issues related to questionnaire design and development for use in a quantitative research study. From the discussion, it is evident that questionnaires constitute an important component of a research survey, and that their importance stems from their ability to help researchers collect data from the field. The paper has underscored the critical importance of ensuring that the questionnaire is valid and reliable in order to respond successfully to the study’s main objectives and key research questions. Additionally, the paper has underscored the need to follow standard practices and processes in designing and developing a questionnaire, and has discussed pilot testing and data analysis using descriptive statistics in depth. Lastly, the paper has reflected on some of the concerns raised in the peer and instructor reviews, as well as some of the considerations that came to light after the questionnaire was piloted.

References

Ayers, RS 2013, ‘Building goal alignment in federal agencies’ performance appraisal programs’, Public Personnel Management, vol. 42 no. 4, pp. 495-520.

Brancato, G, Machia, S, Murgia, M, Signore, M, Simeoni, G, Blanke, K…Hoffmeyer-Zlotnik, JHP 2006, Handbook of recommended practices for questionnaire development and testing in the European statistical system, Web.

Bryman, A & Bell, E 2008, Business research methods, 3rd edn, Oxford University Press, Oxford.

Denscombe, M 2009, Ground rules for social research: Guidelines for good practice, McGraw-Hill International, Maidenhead.

Gregory, I 2003, Ethics in research, Bloomsbury Publishing, London.

Keller, EJ 2014, Identity, citizenship, and political conflict in Africa, Indiana University Press, Bloomington, Indiana.

Krats, P & Brown, TC 2013, ‘Unionised employees’ reactions to the introduction of a goal-based performance appraisal system’, Human Resource Management Journal, vol. 23 no. 4, pp. 396-412.

Lewis, M & Slack, N 2007, Operations management: Critical perspectives on business, Taylor & Francis, New York.

Lietz, P 2010, ‘Research into questionnaire design: A summary of the literature’, International Journal of Market Research, vol. 52 no. 2, pp. 249-272.

Polonsky, MJ & Waller, DS 2010, Designing and managing a research project: A business student’s guide, Sage Publications, Thousand Oaks.

Rattray, J & Jones, MC 2007, ‘Essential elements of questionnaire design and development’, Journal of Clinical Nursing, vol. 16 no. 2, pp. 234-243.

Saunders, M, Lewis, P & Thornhill, A 2007, Research methods for business students, 4th edn, Pearson Education Limited, Essex, England.

Sekaran, U 2006, Research methods for business: A skill building approach, 4th edn, Wiley-India, New Delhi.

Selden, S & Sowa, JE 2011, ‘Performance management and appraisal in human service organisations: Management and staff perspectives’, Public Personnel Management, vol. 40 no. 3, pp. 251-264.

Siniscalco, MT & Auriat, N 2005, Questionnaire design, Web.

Statistics Canada 2010, Survey methods and practices, Web.

Steenkamp, JBEM, de Jong, MG, & Baumgartner, H 2009, ‘Socially desirable response tendencies in survey research’, Journal of Marketing Research, vol. 56 no. 2, pp. 1-74.

Sudarsan, A 2009, ‘Employee performance appraisal: The (un) suitability of management by objectives and key result areas’, CURIE Journal, vol. 2 no. 2, pp. 47-54.

Taylor-Powell, E 1998, Questionnaire design: Asking questions with a purpose, Web.

Thayer-Hart, N, Dykema, J, Elver, K, Schaeffer, NC & Stevenson, J 2010, Survey fundamentals: A guide to designing and implementing surveys, Web.

Van de Mortel, TF 2008, ‘Faking it: Social desirability bias in self-report research’, Australian Journal of Advanced Nursing, vol. 25 no. 4, pp. 40-48.

Venkatesh, V, Brown, SA & Bala, H 2013, ‘Bridging the qualitative-quantitative divide: Guidelines for conducting mixed methods research in information systems’, MIS Quarterly, vol. 37 no. 1, pp. 21-54.

Appendix 1

Draft Questionnaire

Questionnaire on Employees’ Attitudes toward Performance Appraisal

This questionnaire is part of a Doctorate of Business Administration research project. It consists of twenty-two questions that are divided into four sections: personal data, performance appraisal goals, accuracy of rating, and overall satisfaction.

The questionnaire concerns itself with how the employee perceives and understands the appraisal process and whether, in his / her opinion, it is administered equitably. For this reason it is an important and real time concern that you complete this questionnaire objectively since this research is in fact part of the analysis of the issues confronting the organization and may input planning processes.

The information you provide will be confidential. Your anonymity will be assured and the results and analysis of the survey will be open to scrutiny.

Your contribution in no small measure will make this research possible.

Performance Appraisal Goals

How often is the performance appraisal given?

- Quarterly

- Twice per year

- Annually

- Other. Please specify_________________________________________

What does your performance appraisal determine? Please check all that apply.

- Work performance

- Salary

- Target achievements

- Promotions

- Training needs

- Employee objectives until the next appraisal

- Other. Please specify _______________________________________

What are the three most important things you expect from the performance appraisal? Place 1 beside the first most important, 2 beside the second most important, and 3 beside the least important.

- Promotional opportunities _______________________

- Monetary benefits _____________________________

- Training _____________________________________

- Ability to participate in decision making ____________

- Recognitions _________________________________

Knowledge and expertise are recognized and rewarded during the performance appraisal.

- Disagree

- Strongly Disagree

- Neutral

- Agree

- Strongly Agree

Accuracy of Rating

The supervisor clearly explains the performance appraisal procedure to be followed.

- Strongly Disagree

- Disagree

- Neutral

- Agree

- Strongly Agree

The supervisor makes sure that the performance appraisal measures the employee’s performance.

- Strongly Disagree

- Disagree

- Neutral

- Agree

- Strongly Agree

Having a good relationship with the supervisor influences the results of the performance appraisal.

- Strongly Disagree

- Disagree

- Neutral

- Agree

- Strongly Agree

An employee can object to a performance rating if he/she thinks it is unfair.

- Strongly Disagree

- Disagree

- Agree

- Strongly Agree

An employee can discuss disagreements with his/her supervisor.

- Strongly Disagree

- Disagree

- Agree

- Strongly Agree

Overall satisfaction with Performance Appraisal

I am satisfied with the assessment of my performance.

- Strongly Disagree

- Disagree

- Neutral

- Agree

- Strongly Agree

I believe that the performance appraisal can help me improve my performance.

- Strongly Disagree

- Disagree

- Neutral

- Agree

- Strongly Agree

I think my organization should change the performance appraisal system.

- Strongly Disagree

- Disagree

- Neutral

- Agree

- Strongly Agree

I believe in the usefulness of performance appraisal in my job advancement.

- Strongly Disagree

- Disagree

- Neutral

- Agree

- Strongly Agree

I see the performance appraisal as a threat in my job advancement.

- Strongly Disagree

- Disagree

- Neutral

- Agree

- Strongly Agree

I have experienced follow up since my last performance appraisal.

- Yes

- No

If “Yes” please specify ___________________________________________________________________________

Personal Data

Department: _______________________________

Gender: ◊ Male ◊ Female

Check the region where you hold your citizenship:

- Gulf Cooperation Council (GCC) Countries

- Middle East

- Africa

- Asia

- Europe

- United States or Canada

- Central or South America

- Other. Please specify_______________________________

Check the category that includes your age.

- ◊ 18 – 24

- ◊ 25 – 34

- ◊ 35 – 44

- ◊ 45 – 54

- ◊ 55 – 64

- ◊ 65+

Years of work experience:

- ◊ Less than 1 year

- ◊ 1 – 5 years

- ◊ 5 – 10 years

- ◊ More than 10 years

Thank you for cooperation.

Appendix 2

Final Questionnaire

Questionnaire on Employees’ Attitudes toward Performance Appraisal

This questionnaire is part of a Doctorate of Business Administration research project. It consists of twenty-two questions that are divided into four sections: personal data, performance appraisal goals, accuracy of rating, and overall satisfaction.

The questionnaire concerns itself with how the employee perceives and understands the appraisal process and whether, in his/her opinion, it is administered equitably. For this reason, it is important that you complete this questionnaire objectively, since this research is part of the analysis of the issues confronting the organization and may inform planning processes.

The information you provide will be confidential. Your anonymity will be assured and the results and analysis of the survey will be open to scrutiny.

Your contribution in no small measure will make this research possible.

Personal Data

Job category:

- □ Administrative

- □ Faculty

Gender: □ Male □ Female

Check the region that corresponds to your nationality:

- Gulf Cooperation Council (GCC) Countries

- Middle East and North Africa (MENA)

- Africa

- Asia

- Europe

- United States and Canada

- Central and South America

- Other. Please specify_______________________________

Check the category that includes your age.

- □ 18 – 24

- □ 25 – 34

- □ 35 – 44

- □ 45 – 54

- □ 55 – 64

- □ 65+

Years of work experience:

- □ Less than 1 year

- □ 1 – 5 years

- □ 5 – 10 years

- □ 10 – 15 years

- □ 15 – 20 years

- □ More than 20 years

Performance Appraisal Goals

How often is the performance appraisal conducted?

- Quarterly

- Twice per year

- Annually

- Other. Please specify___________________________________

What does your performance appraisal determine? Please check all that apply:

- Work performance

- Salary increment

- Target achievements

- Promotions

- Training needs

- Employee objectives until the next appraisal

- Other. Please specify _______________________________________

What are the three most important things you expect from the performance appraisal? Place 1 beside the first most important, 2 beside the second most important, and 3 beside the third most important.

- Promotional opportunities _____________________

- Monetary benefits ____________________________

- Training ____________________________________

- Ability to participate in decision making ___________

- Recognitions ________________________________

- Other. Please specify __________________________

Work-related knowledge is recognized and rewarded during the performance appraisal.

- Strongly Disagree

- Disagree

- Neutral

- Agree

- Strongly Agree

Expertise is recognized and rewarded during the performance appraisal.

- Strongly Disagree

- Disagree

- Neutral

- Agree

- Strongly Agree

Accuracy of Rating

The supervisor clearly explains the performance appraisal procedure to be followed.

- Strongly Disagree

- Disagree

- Neutral

- Agree

- Strongly Agree

The supervisor makes sure that the performance appraisal measures the employee’s true performance.

- Strongly Disagree

- Disagree

- Neutral

- Agree

- Strongly Agree

Being friends with the supervisor influences the results of the performance appraisal.

- Strongly Disagree

- Disagree

- Neutral

- Agree

- Strongly Agree

An employee can object to a performance rating if he/she thinks it is unfair.

- Strongly Disagree

- Disagree

- Neutral

- Agree

- Strongly Agree

An employee can discuss the results of the performance appraisal with his/her supervisor.

- Strongly Disagree

- Disagree

- Neutral

- Agree

- Strongly Agree

Overall satisfaction with Performance Appraisal

I am satisfied with the assessment of my performance.

- Strongly Disagree

- Disagree

- Neutral

- Agree

- Strongly Agree

Performance appraisal can help me improve my performance.

- Strongly Disagree

- Disagree

- Neutral

- Agree

- Strongly Agree

My organization should change the performance appraisal system.

- Strongly Disagree

- Disagree

- Neutral

- Agree

- Strongly Agree

I believe in the usefulness of performance appraisal in my job advancement.

- Strongly Disagree

- Disagree

- Neutral

- Agree

- Strongly Agree

I see the performance appraisal as a threat in my job advancement.

- Strongly Disagree

- Disagree

- Neutral

- Agree

- Strongly Agree

I have experienced follow up since my last performance appraisal.

- Yes

- No

If “Yes” please specify: _________________________________________________________________________

Appreciation

I take this opportunity to thank you for the time you have taken to respond to the above questions. You have helped profoundly in the process of studying this topic and completing the research. It is expected that these views will help to bring new insight to this field, especially considering the challenges that employees face in relation to performance appraisal. Kindly let me know, by ticking either Yes or No, whether you would be willing to help with further research on the same topic should I have more questions.

- □ Yes

- □ No

Also, if you need further clarification about my questions, please contact me using the contact information provided below.

Contact Information

Email: [email protected]

Phone Number: +974 66414288

Yours respectfully,

Aline Shatila.